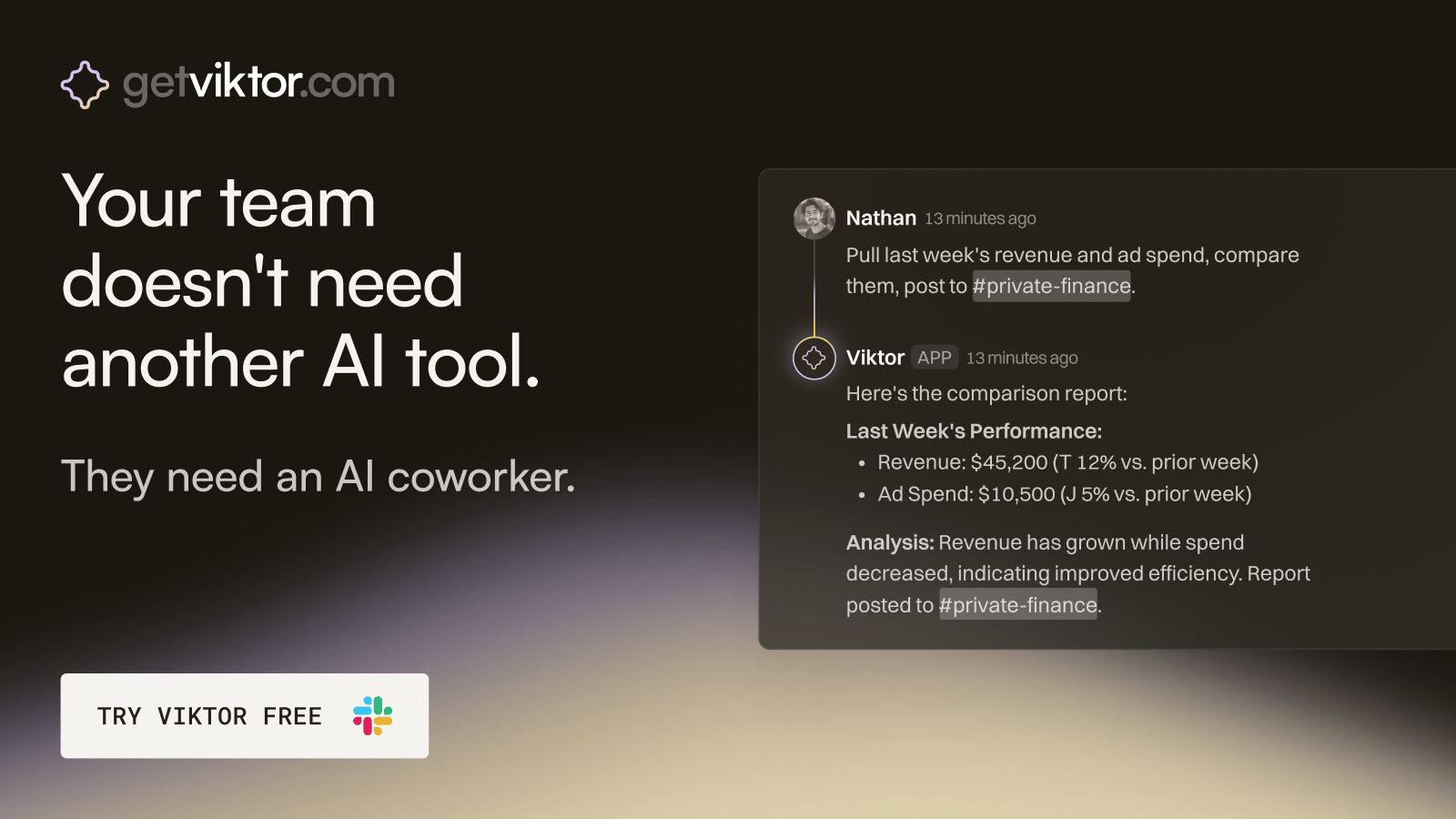


You know more than you think you do. The problem is your knowledge is scattered across 14 apps, a handful of notebooks you do not open anymore, 200 browser tabs, and a Notion database that started organized and gradually became a digital junk drawer.

That knowledge (your frameworks, client wins, hard-won lessons, and industry patterns) is the most valuable intellectual asset you own. Right now, most of it is effectively inaccessible: you cannot search it, you cannot use it to train anything, and you certainly cannot deploy it at scale.

A personal AI knowledge base changes that. It turns your accumulated expertise into a retrieval engine: something you can query, that surfaces the right information at the right moment, and that becomes more useful the more you feed it.

Here is how to build one that actually works.

What a Personal AI Knowledge Base Is and Is Not

Let us be precise about what we are building so we do not spend time on something you will set up in a weekend and never open again.

A personal AI knowledge base is not just a Notion wiki. It is not just a folder of PDFs. It is not a chatbot trained on your content that you demo once and forget about.

It is a structured, searchable, queryable system that combines your existing knowledge assets, including notes, transcripts, SOPs, articles, and frameworks, with a retrieval layer powered by AI that lets you surface relevant information in response to natural language queries. When you ask it what has worked best for converting cold traffic in the home improvement niche, it does not search by keyword. It understands the question, searches semantically, and pulls the most relevant chunks from everything you have fed it.

That distinction, between keyword search and semantic retrieval, is what makes this different from the filing systems you have already abandoned.
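The toy Python sketch below makes the distinction concrete. The three-number vectors are hypothetical, hand-picked stand-ins for what an embedding model would produce. The only point is that keyword matching finds nothing for this query, while ranking by cosine similarity still surfaces the relevant document.

```python
import math

# Hypothetical 3-dimensional vectors standing in for real embeddings.
# A real embedding model produces these, typically with hundreds of dimensions or more.
DOCS = {
    "Lead-gen playbook for roofing and remodeling contractors": [0.9, 0.1, 0.2],
    "Notes on B2B SaaS pricing tiers": [0.1, 0.9, 0.1],
    "Podcast guest checklist": [0.1, 0.2, 0.9],
}


def keyword_search(query, docs):
    """Return titles that share at least one word with the query."""
    terms = set(query.lower().split())
    return [title for title in docs if terms & set(title.lower().split())]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def semantic_search(query_vec, docs, k=1):
    """Rank titles by cosine similarity between the query vector and each doc vector."""
    return sorted(docs, key=lambda t: cosine(query_vec, docs[t]), reverse=True)[:k]


# The query shares no words with any title, so keyword search finds nothing.
print(keyword_search("cold traffic home improvement", DOCS))  # []
# A hypothetical query embedding near the first doc's vector still retrieves it.
print(semantic_search([0.8, 0.2, 0.1], DOCS))
# ['Lead-gen playbook for roofing and remodeling contractors']
```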

Step 1: Audit and Inventory Your Existing Knowledge Assets

Before you build anything, take an inventory of what you actually have. Most people are sitting on more usable intellectual property than they realize.

Your inventory should cover: call recordings from client sessions, discovery calls, and sales conversations; written content including newsletter editions, blog posts, social posts, and published frameworks; internal documents including SOPs, onboarding materials, and training content; personal notes from books, courses, and conferences; email threads that contain genuinely useful insights; and any proprietary frameworks, models, or processes you have developed over time.

Do not worry yet about format or quality. Just make the list. The goal is to see the full surface area of what you are working with. Most people doing this exercise for the first time are surprised by how much they have produced and how little of it is currently accessible.
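If much of your material already sits in folders on disk, a few lines of Python can give you a first-pass count by file type. This is a minimal sketch. `inventory` and `count_by_type` are illustrative names, and the script only counts files; it does not judge whether they are useful.

```python
from collections import Counter
from pathlib import Path


def count_by_type(paths):
    """Tally file paths by lowercased extension ('' for files with none)."""
    return Counter(Path(p).suffix.lower() for p in paths)


def inventory(root):
    """Walk `root` recursively and tally every file by extension."""
    files = (p for p in Path(root).expanduser().rglob("*") if p.is_file())
    return count_by_type(files)
```

For example, `inventory("~/Documents").most_common(10)` lists the ten most common file types under that folder. That is a quick way to see whether you are mostly sitting on PDFs, transcripts, or notes.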

Step 2: Choose Your Architecture

There are two core approaches to building a personal knowledge base, and the right one depends on your technical comfort level and how you want to interact with the system.

Option A: Notion with AI Plugin (Low Technical Lift)

If you want to start fast and stay inside a tool you already use, Notion with its AI features enabled, or a dedicated AI note tool such as Mem.ai or Reflect, gives you semantic search across your notes. You paste or upload your content, the tool indexes it, and you can ask natural language questions against your library. This is not as powerful as a custom RAG system, but you can deploy it in a day and it is useful immediately.

Option B: Custom RAG System (Higher Capability)

RAG stands for Retrieval-Augmented Generation. It is the architecture behind many enterprise knowledge systems, and individuals can now use it through frameworks like LlamaIndex and LangChain or platforms like Relevance AI and Dust.tt. You upload your documents, and the system splits them into chunks and converts each chunk into a vector embedding. When you query the system, it retrieves the most semantically relevant chunks and passes them to a language model, which generates a synthesized response.
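The retrieve-then-generate loop can be sketched in plain Python, under heavy assumptions. `embed` here is a bag-of-words placeholder, not a real embedding model, so it matches shared words rather than meaning. `build_prompt` also stops at producing the prompt text instead of calling any particular language model API. Frameworks like LlamaIndex and LangChain provide production versions of each step.

```python
import math
from collections import Counter


def embed(text):
    """Placeholder embedding: a bag-of-words count vector.
    A real RAG system would call an embedding model here instead."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)  # missing terms count as 0
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, chunks, k=2):
    """Return the k chunks whose vectors are most similar to the query's."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query, chunks, k=2):
    """Combine the retrieved chunks and the question into one prompt,
    which would then be sent to a language model."""
    top = retrieve(query, chunks, k)
    context = "\n\n".join(f"[{i + 1}] {c}" for i, c in enumerate(top))
    return f"Answer using only the context below.\n\n{context}\n\nQuestion: {query}"
```

For brevity, this sketch re-embeds every chunk on each query. A real system computes chunk embeddings once and stores them in a vector database.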

This approach is more powerful, handles larger knowledge bases better, and gives you more control over how retrieval works. The setup requires more effort (expect anywhere from a weekend to a week of configuration), but the long-term payoff is significantly higher.

For most operators building this for the first time, starting with Option A and migrating to Option B when the limitations become frustrating is the right call.

Step 3: Process and Prepare Your Content

Raw content does not go in as-is. You need to do three things before uploading anything.

First, clean for signal-to-noise. A call transcript that is 40 percent filler phrases, repeated questions, and off-topic tangents is going to pollute your retrieval. Run transcripts through a summarization pass first. Pull out the key insights, frameworks, decisions, and lessons. Upload the cleaned version.
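The summarization pass itself is usually a language model call. Before that step, a cheap deterministic pre-clean can strip verbal filler so the model sees less noise. The sketch below covers only that pre-clean; it is not a summarizer. The `FILLERS` list is a hypothetical starting point to tune against your own transcripts. Words like "like" are left out because they often carry meaning.

```python
import re

# Hypothetical filler list; extend it based on your own transcripts.
FILLERS = ["you know", "i mean", "um", "uh"]

# Match a whole filler phrase, an optional trailing comma, and following spaces.
_FILLER_RE = re.compile(
    r"\b(?:" + "|".join(re.escape(f) for f in FILLERS) + r")\b,?\s*",
    re.IGNORECASE,
)


def strip_fillers(transcript):
    """Remove filler phrases, then collapse any leftover runs of whitespace."""
    cleaned = _FILLER_RE.sub("", transcript)
    return re.sub(r"\s{2,}", " ", cleaned).strip()
```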

Second, add metadata. The value of a knowledge base scales with how well you can filter and slice it. Tag every document with category, date, client type, topic area, and any relevant keywords. This metadata does more than help you search: it lets the retrieval layer narrow a query to the right subset of documents before it ranks results.
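One way to represent those tags is a small record type plus a filter function. The field names below (`category`, `client_type`, `topics`, `created`) are a hypothetical schema that mirrors the tags listed above. They are not the format of any particular tool.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Doc:
    """One knowledge-base entry with hypothetical metadata fields."""
    title: str
    text: str
    category: str
    created: date
    client_type: str = ""
    topics: set[str] = field(default_factory=set)


def filter_docs(docs, category=None, client_type=None, topic=None, since=None):
    """Return docs that match every filter that is not None."""
    return [
        d for d in docs
        if (category is None or d.category == category)
        and (client_type is None or d.client_type == client_type)
        and (topic is None or topic in d.topics)
        and (since is None or d.created >= since)
    ]
```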

Third, chunk deliberately. If you are using a RAG architecture, how you chunk your documents matters enormously for retrieval quality. Chunking by conceptual unit (one idea per chunk, with enough context in each chunk to stand alone) generally outperforms naive fixed-length chunking that cuts text every N characters or tokens. Most knowledge base tools handle chunking automatically, but knowing the principle helps you structure your content better before you upload.
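The difference between the two strategies is easy to see in code. This sketch treats paragraphs (separated by blank lines) as a rough stand-in for conceptual units. It prefixes each chunk with its document title so the chunk carries context on its own. Both functions are illustrative and are not taken from any specific library.

```python
import re


def fixed_length_chunks(text, size=200):
    """Naive baseline: cut every `size` characters, even mid-sentence."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def conceptual_chunks(title, text):
    """Treat each blank-line-separated paragraph as one idea and prefix it
    with the document title so the chunk stands on its own."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    return [f"{title}: {p}" for p in paragraphs]
```

Real tools usually also cap chunk size in tokens and may merge very short paragraphs. This sketch skips both for clarity.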

Step 4: Build the Retrieval Interface

The knowledge base is only as useful as your ability to interact with it. You need an interface that makes querying natural and fast enough that you will actually do it.

The simplest interface is a chat window. Claude's Projects feature, for example, lets you upload documents and have conversations about them. For a personal knowledge base with hundreds of documents, you will want something more robust: a custom GPT with your knowledge base attached, a Dust.tt deployment, or a Relevance AI agent that sits in front of your vector database.

The interface you build should support three types of queries: recall ("What did I write about pricing strategy for agencies?"), synthesis ("What are the common patterns in my most successful client engagements?"), and generation ("Using my existing frameworks, draft a response to this client situation.").
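A lightweight way to support all three query types is to keep a separate prompt template for each. The template wording and the `make_query` helper below are hypothetical. The retrieved chunks would go to the model alongside the resulting prompt.

```python
# Hypothetical prompt templates, one per query type.
TEMPLATES = {
    "recall": "Find and quote the passages in my notes about: {q}",
    "synthesis": "Across all retrieved notes, identify recurring patterns about: {q}. Cite each source.",
    "generation": "Using only the frameworks in the retrieved notes, {q}",
}


def make_query(kind, question):
    """Wrap a user question in the template for its query type."""
    if kind not in TEMPLATES:
        raise ValueError(f"unknown query type: {kind!r}")
    return TEMPLATES[kind].format(q=question)
```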

If your system can handle all three, you have a real tool. If it can only handle the first one, you have a fancy search bar.

Step 5: Build the Intake Pipeline

A knowledge base is only as good as what you feed it, which means the most important thing you build is not the retrieval system. It is the pipeline that keeps the system current.

Every call you record should automatically be transcribed and added. Every article you publish should be automatically ingested. Every significant email thread should have a one-click path into the system. Every framework you develop should live there.

The tools to build this: Otter.ai or Fireflies.ai for automatic transcription of calls, Zapier or Make.com to route transcripts and published content into your knowledge base, and a simple browser bookmarklet or extension for ad hoc additions when you find something worth capturing.
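Automations retry, so the same transcript can easily arrive twice. A simple safeguard is to key each item by a hash of its normalized text, which means repeated deliveries are skipped. In this sketch, `store` is a plain dict standing in for whatever your knowledge base actually uses, and `content_id` and `ingest` are hypothetical names.

```python
import hashlib


def content_id(text):
    """Stable ID from whitespace- and case-normalized content."""
    normalized = " ".join(text.split()).lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def ingest(items, store):
    """Add (source, text) items to `store` (a dict keyed by content ID),
    skipping duplicates. Returns how many items were actually added."""
    added = 0
    for source, text in items:
        cid = content_id(text)
        if cid not in store:
            store[cid] = {"source": source, "text": text}
            added += 1
    return added
```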

Set a 10-minute Friday ritual to review what went in during the week and tag anything that needs metadata cleaning. That is the maintenance.
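If your documents record an added date and tags, the Friday review can start from a generated list instead of a manual scan. This sketch assumes each document is a dict with hypothetical `title`, `added`, `category`, and `topics` keys.

```python
from datetime import date, timedelta


def needs_tagging(docs, today, days=7, required=("category", "topics")):
    """Return titles of docs added in the last `days` days that are
    missing any required metadata field (absent or empty)."""
    cutoff = today - timedelta(days=days)
    return [
        d["title"] for d in docs
        if d["added"] >= cutoff and any(not d.get(f) for f in required)
    ]
```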

What This Actually Unlocks

Once your personal knowledge base is alive and fed, something changes. You stop losing your own thinking. You stop reinventing frameworks you already built. You stop forgetting what worked with a specific type of client or in a specific market situation.

More practically: when you are writing a proposal, you can query your past wins in that vertical and pull the language that converted. When you are building a new offer, you can surface every insight you have ever captured about that audience's pain points. When you are training a team member, you can export a synthesized briefing document from your own intellectual archive in minutes.

The knowledge base becomes a multiplier on every hour you have worked in the past. All that experience locked in inaccessible archives suddenly becomes queryable, usable, and deployable.

That is what working while you sleep actually means in practice. Not passive income. Your accumulated expertise, available, searchable, and generative, without you having to be present to access it.

Build it this month. You will wonder why you waited this long.

The Maintenance Reality

One of the reasons people do not build knowledge bases is the assumption that maintaining one will become another full-time job. That assumption is based on the wrong mental model. A well-built knowledge base does not require active curation on your part. It requires a well-designed intake pipeline and a periodic review ritual.

The intake pipeline, once configured, runs without your attention. Calls get transcribed and ingested. Published content gets routed automatically. The only things that require manual addition are the one-off documents, frameworks, and email threads that fall outside your standard workflows, and those typically take less than five minutes each to add.

The periodic review ritual is a 30-minute monthly session where you check what came in, verify that tagging is accurate, and identify anything that should be removed because it is outdated. For most operators, this 30-minute monthly session is a net positive in terms of how they think about their business, because reviewing what you have produced and learned has independent strategic value beyond the maintenance function.

What to Query First

When your knowledge base is live, the fastest way to build trust in the system is to start with queries that have answers you already know. Ask it about a topic where you have written extensively. Ask it to surface the patterns in how you have handled a specific type of client situation. Ask it to find the framework you built for a problem you solved two years ago that you can barely remember.
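Queries with known answers also work as a small test suite. The sketch below computes a hit rate: the fraction of known-answer queries whose expected document appears in the top results. It assumes a hypothetical `search(query, k)` function that returns document IDs. Plug in whatever retrieval call your setup exposes.

```python
def hit_rate_at_k(search, test_cases, k=3):
    """Fraction of (query, expected_doc_id) pairs whose expected document
    appears in the top-k IDs returned by `search(query, k)`."""
    if not test_cases:
        raise ValueError("need at least one test case")
    hits = sum(1 for query, expected in test_cases if expected in search(query, k))
    return hits / len(test_cases)
```

Re-run the same test cases after you change chunking or tagging, and you get a rough before-and-after comparison.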

When the system surfaces the right answer, accurately and quickly, something shifts. You stop thinking of it as a tool you built and start thinking of it as a resource that actually works. That shift in perception is what drives consistent use, and consistent use is what makes the system increasingly valuable over time.

The knowledge base compounds in usefulness the more you feed it and the more you query it. The queries themselves help you understand what is in the system and what is missing, which refines how you think about the intake pipeline going forward.
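To turn queries into intake priorities, you can log each query with its best retrieval score and review the weakest ones. This sketch assumes your system reports a similarity score per query. The 0.5 threshold is a hypothetical placeholder, because sensible cutoffs vary by embedding model.

```python
def find_gaps(query_log, threshold=0.5):
    """Given (query, best_similarity_score) pairs, return the queries whose
    best match fell below `threshold`, weakest first."""
    weak = [(q, s) for q, s in query_log if s < threshold]
    return [q for q, _ in sorted(weak, key=lambda pair: pair[1])]
```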

After six months of consistent use, operators who have built this kind of system tend to describe the same experience: they feel smarter. Their intelligence has not changed. Their accumulated knowledge has become accessible and deployable rather than buried and forgotten. That is the real payoff.

THE AI NEWSROOM | JORDAN HALE | AINEWSROOMDAILY.COM